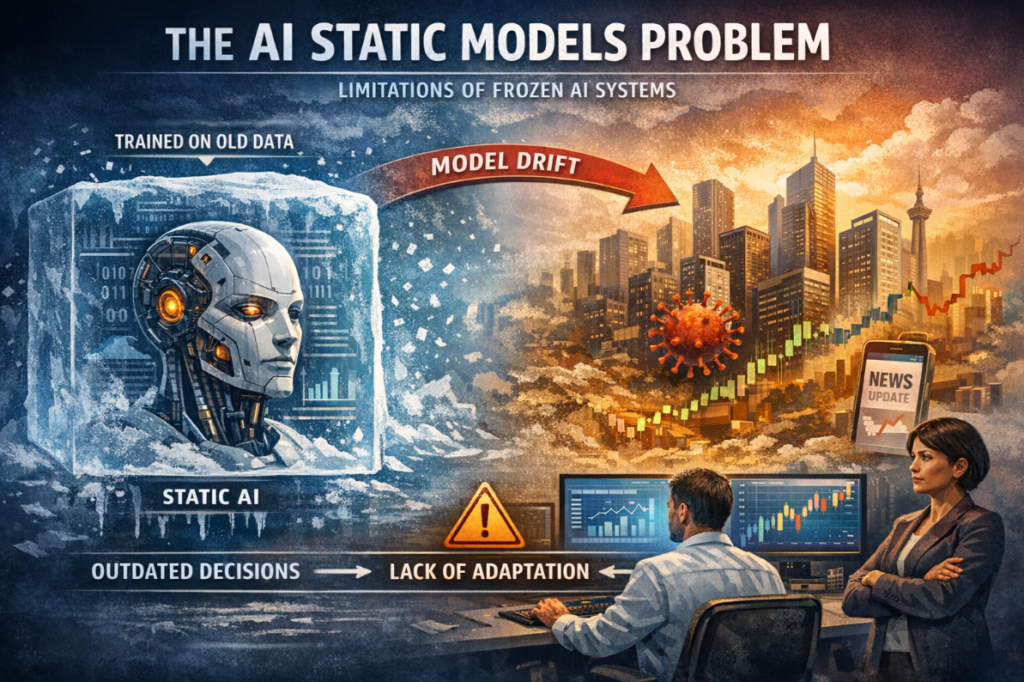

Artificial intelligence has transformed industries ranging from healthcare and finance to education and digital marketing. Yet behind these impressive achievements lies a fundamental challenge that experts increasingly recognize as a major bottleneck in AI progress: the AI static models problem. While AI systems often appear intelligent and adaptive, many are built on static foundations that limit their long-term effectiveness, reliability, and trustworthiness.

The AI static models problem refers to the inability of many AI systems to update their understanding of the world after deployment. Once trained and released, these models remain largely frozen, relying on the data and assumptions that held at training time. In a rapidly changing world, this creates growing gaps between AI predictions and real-world conditions. Understanding this problem is essential for the developers, decision-makers, and users who depend on AI-driven tools every day.

This in-depth guide explores what the AI static models problem is, why it exists, how it affects real-world applications, and which solutions are emerging to overcome it. Drawing on expert insights, industry experience, and current research, it aims to provide a clear and practical perspective on the issue.

What Is the AI Static Models Problem?

At its core, the AI static models problem describes the limitations of artificial intelligence systems that do not learn or adapt after deployment. These models are trained on historical datasets, optimized during development, and then released into production environments, where they operate without continuous learning.

This static nature means the model’s knowledge is fixed at a specific point in time. Any changes in user behavior, market conditions, language usage, regulations, or external environments are not automatically reflected in the model’s decisions. Over time, this gap between training data and real-world conditions grows, reducing accuracy and reliability.
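As a minimal illustration, assuming entirely hypothetical toy data, the Python sketch below "trains" a one-parameter threshold rule once and then evaluates it after the real-world cutoff has moved. The names `make_data`, `fit_threshold`, and `accuracy` are illustrative, not taken from any library.

```python
from typing import List, Tuple

Example = Tuple[float, int]


def make_data(cutoff: float, n: int = 100) -> List[Example]:
    """Deterministic toy data: x in [0, 1), labeled 1 when x >= cutoff."""
    return [(i / n, int(i / n >= cutoff)) for i in range(n)]


def accuracy(threshold: float, data: List[Example]) -> float:
    """Share of examples where the rule 'predict 1 if x >= threshold' is right."""
    return sum(int(x >= threshold) == y for x, y in data) / len(data)


def fit_threshold(data: List[Example]) -> float:
    """'Training': choose the candidate threshold with the best accuracy."""
    candidates = sorted({x for x, _ in data})
    return max(candidates, key=lambda t: accuracy(t, data))


# Train once on historical data (true cutoff 0.5), then freeze the result.
train = make_data(cutoff=0.5)
frozen_threshold = fit_threshold(train)

# After deployment the world changes (true cutoff 0.7); the model does not.
live = make_data(cutoff=0.7)

print(f"accuracy at training time: {accuracy(frozen_threshold, train):.2f}")
print(f"accuracy after the shift:  {accuracy(frozen_threshold, live):.2f}")
```

On this toy data the frozen rule scores 1.00 at training time and 0.80 after the shift. Nothing about the model changed; only the world around it did.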

The problem is not that static models are poorly designed; many are highly sophisticated. The issue is that performing well in a dynamic environment requires ongoing adaptation, and without it even the most advanced AI systems can become obsolete or misleading.

Why Static AI Models Became the Norm

Understanding why the AI static models problem exists requires looking at how AI systems are traditionally built. Training large models is expensive, time-consuming, and computationally intensive. Once a model is trained, freezing it allows companies to control costs, ensure predictable behavior, and meet regulatory or safety requirements.

Static models are also easier to test and validate. When a model does not change, its outputs remain consistent, making it simpler to audit and debug. In high-stakes industries such as healthcare or finance, this predictability is often preferred over constant change.

However, this design choice comes with trade-offs. As environments evolve, static models fail to reflect new patterns. The AI static models problem is a consequence of prioritizing stability over adaptability.

How the AI Static Models Problem Impacts Real-World Applications

The effects of the AI static models problem are not theoretical. They appear daily across industries and platforms that rely on artificial intelligence.

In healthcare, diagnostic models trained on older patient data may struggle to recognize new disease variants or shifts in population health trends. This can lead to misdiagnosis or delayed treatment recommendations.

In finance, static risk assessment models may fail to account for sudden economic changes, new fraud techniques, or evolving consumer behavior. As a result, they can either overestimate or underestimate risk, harming both institutions and customers.

In digital marketing and content recommendation, static AI systems often deliver irrelevant or outdated suggestions. User preferences change quickly, and models that do not adapt see engagement and user trust decline.

In practice, organizations frequently discover that performance metrics decline in the months after deployment. This degradation, commonly called model drift or model decay, is a direct manifestation of the AI static models problem and is usually driven by the data drift and concept drift described below.

Data Drift and Concept Drift as Key Contributors

Two closely related phenomena intensify the AI static models problem: data drift and concept drift. Data drift occurs when the statistical distribution of the input data, P(X), changes over time. Concept drift occurs when the underlying relationship between inputs and outputs, P(Y | X), changes, so that the same inputs should now lead to different predictions.
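One common way to quantify data drift for a single numeric feature is the Population Stability Index (PSI), which compares the binned distribution of recent inputs with a reference sample from training time: PSI = Σ (aᵢ − eᵢ) · ln(aᵢ / eᵢ), where eᵢ and aᵢ are the reference and current shares of bin i. Below is a minimal pure-Python sketch; the equal-width binning, the `eps` floor for empty bins, and the rule of thumb that values above roughly 0.25 indicate a significant shift are conventions rather than fixed standards. PSI only looks at inputs, so detecting concept drift additionally requires labeled outcomes.

```python
import math
from typing import List, Sequence


def psi(reference: Sequence[float], current: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `current` relative to `reference`."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Equal-width bins over the reference range; out-of-range values
            # are clipped into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays finite.
        return [max(c / len(values), eps) for c in counts]

    expected = proportions(reference)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


reference = [i / 100 for i in range(100)]        # training-time feature values
shifted = [0.5 + i / 200 for i in range(100)]    # recent values, pushed upward

print(round(psi(reference, reference), 4))  # identical samples give 0
print(round(psi(reference, shifted), 2))     # large value signals strong drift
```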

For example, language models trained on older text may misinterpret new slang, cultural references, or emerging topics. Fraud detection systems may miss novel attack patterns because criminals adapt faster than static models.

In practice, some degree of drift is unavoidable in most real-world systems. Because many AI deployments are static, they are inherently vulnerable to such changes, which reinforces the AI static models problem.

Trust, Bias, and Ethical Concerns

Another critical dimension of the AI static models problem involves trust and ethics. Static models often embed historical biases present in their training data. Without ongoing updates or corrective mechanisms, these biases persist, and their impact can grow as the gap between historical data and current norms widens.

In hiring systems, for instance, a static AI trained on biased historical hiring data may continue to disadvantage certain groups long after societal norms and regulations have changed. In lending or credit scoring, outdated assumptions can lead to unfair decisions.

From an ethical standpoint, the inability to adapt undermines transparency and accountability. Users may assume AI systems are learning and improving, when in reality they are operating on frozen assumptions. This mismatch between perception and reality erodes trust.

Why Retraining Alone Is Not Enough

A common response to the AI static models problem is periodic retraining. While retraining helps, it does not fully solve the issue: retraining cycles are often infrequent because of cost, infrastructure constraints, and operational risk.

Between retraining intervals, models remain static and vulnerable to drift. Additionally, retraining requires collecting new labeled data, which may be scarce or expensive. There is also the risk of introducing new errors or biases during each retraining cycle.
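One way to limit the risk that a retraining cycle introduces new errors or biases is to treat each retrained model as a challenger that replaces the deployed champion only if it passes explicit checks on held-out data. The sketch below is hypothetical: `EvalReport`, `should_promote`, and both thresholds are illustrative choices, and a real gate would likely check more metrics than these two.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class EvalReport:
    """Held-out evaluation results for one model version (hypothetical)."""
    accuracy: float
    group_accuracy: Dict[str, float] = field(default_factory=dict)


def should_promote(champion: EvalReport, challenger: EvalReport,
                   min_gain: float = 0.0, max_group_drop: float = 0.02) -> bool:
    """Promote the retrained challenger only if it does not regress."""
    # Overall quality must at least match the current production model.
    if challenger.accuracy < champion.accuracy + min_gain:
        return False
    # No subgroup may lose more than `max_group_drop` accuracy; a group
    # missing from the challenger's report counts as a failure.
    for group, old_acc in champion.group_accuracy.items():
        new_acc = challenger.group_accuracy.get(group)
        if new_acc is None or old_acc - new_acc > max_group_drop:
            return False
    return True


champion = EvalReport(0.90, {"group_a": 0.92, "group_b": 0.88})
better = EvalReport(0.93, {"group_a": 0.94, "group_b": 0.89})
uneven = EvalReport(0.93, {"group_a": 0.97, "group_b": 0.80})

print(should_promote(champion, better))   # True
print(should_promote(champion, uneven))   # False: group_b drops by 0.08
```

The `uneven` challenger has higher overall accuracy yet is still rejected, because the gate also catches a regression concentrated in one subgroup.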

Experienced practitioners recognize that retraining is a partial mitigation rather than a complete solution to the AI static models problem.

The Business Risks of Ignoring Static Model Limitations

From a business perspective, the AI static models problem introduces hidden risks. Declining model performance can directly affect revenue, customer satisfaction, and brand reputation. In regulated industries, outdated AI decisions may lead to compliance violations and legal consequences.

Organizations that rely heavily on AI-driven automation often assume long-term efficiency gains. However, static models require ongoing monitoring, manual intervention, and frequent updates to maintain acceptable performance. Ignoring these needs can turn AI from an asset into a liability.

Senior leaders and stakeholders increasingly demand evidence that AI systems remain accurate and fair over time. Addressing the AI static models problem is therefore a strategic issue as well as a technical one.

Emerging Solutions to the AI Static Models Problem

Researchers and industry practitioners are actively developing approaches to overcome the AI static models problem. One promising direction involves adaptive, continuously learning systems that update their parameters as new data becomes available.

Online learning techniques allow models to adjust incrementally rather than retraining from scratch. These methods help AI systems remain aligned with current data trends while minimizing disruption.
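Online learning can be illustrated with logistic regression trained by stochastic gradient descent, where each newly labeled example nudges the weights instead of triggering a full retrain. This pure-Python sketch uses a toy one-feature stream (an assumption for illustration) whose input-output relationship flips halfway through; the class name and learning rate are hypothetical choices.

```python
import math
from typing import Sequence


class OnlineLogisticRegression:
    """Logistic regression updated one example at a time with plain SGD."""

    def __init__(self, n_features: int, lr: float = 0.5):
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = lr

    def predict_proba(self, x: Sequence[float]) -> float:
        z = self.b + sum(wi * xi for wi, xi in zip(self.w, x))
        # Numerically stable sigmoid for large positive or negative z.
        if z >= 0:
            return 1.0 / (1.0 + math.exp(-z))
        ez = math.exp(z)
        return ez / (1.0 + ez)

    def update(self, x: Sequence[float], y: int) -> None:
        # Gradient of the log-loss for a single example is (p - y) * x.
        error = self.predict_proba(x) - y
        self.w = [wi - self.lr * error * xi for wi, xi in zip(self.w, x)]
        self.b -= self.lr * error


model = OnlineLogisticRegression(n_features=1)
inputs = [-1.0, -0.5, 0.5, 1.0]

# Regime 1: positive inputs are labeled 1.
for _ in range(100):
    for v in inputs:
        model.update([v], int(v > 0))
print(round(model.predict_proba([1.0]), 3))

# Regime 2: the relationship flips; the model keeps learning from the stream.
for _ in range(100):
    for v in inputs:
        model.update([v], int(v < 0))
print(round(model.predict_proba([1.0]), 3))
```

Because every update moves the weights, the same mechanism that lets the model track the flipped relationship also lets noisy or adversarial data pull it off course, which is one reason online learners are usually paired with monitoring.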

Another approach focuses on human-in-the-loop systems. In these designs, human experts regularly review AI outputs, correct errors, and provide feedback that informs future updates. This combination of automation and human judgment helps maintain accuracy and accountability.
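A simple human-in-the-loop pattern is confidence-based routing: predictions the model is sure about are automated, uncertain ones are deferred to a reviewer, and the reviewer's labels are kept as feedback for the next update. The class below is a hypothetical sketch; the 0.8 threshold is an arbitrary example value that would need tuning against review capacity and the cost of errors.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class ReviewRouter:
    """Routes low-confidence predictions to human reviewers (hypothetical)."""
    threshold: float = 0.8
    review_queue: List[Tuple[str, float]] = field(default_factory=list)
    feedback: List[Tuple[str, int]] = field(default_factory=list)

    def route(self, item_id: str, prob_positive: float) -> Optional[int]:
        """Return an automated label, or None if a human must decide."""
        confidence = max(prob_positive, 1.0 - prob_positive)
        if confidence >= self.threshold:
            return int(prob_positive >= 0.5)
        self.review_queue.append((item_id, prob_positive))
        return None

    def record_review(self, item_id: str, human_label: int) -> None:
        """Store the reviewer's decision as a labeled example for retraining."""
        self.review_queue = [(i, p) for i, p in self.review_queue if i != item_id]
        self.feedback.append((item_id, human_label))


router = ReviewRouter()
print(router.route("txn-1", 0.97))  # 1, automated
print(router.route("txn-2", 0.05))  # 0, automated
print(router.route("txn-3", 0.62))  # None, sent to a reviewer
router.record_review("txn-3", 1)
print(router.feedback)              # [('txn-3', 1)]
```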

Monitoring and Governance as Essential Components

Effective monitoring plays a crucial role in mitigating the AI static models problem. By tracking performance metrics, input distributions, and outcome fairness over time, organizations can detect drift early and respond before it causes serious harm.
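A minimal form of performance monitoring compares accuracy over a sliding window of recent labeled outcomes with the accuracy measured at validation time, and raises an alert when the gap exceeds a tolerance. In this Python sketch the class name, window size, and tolerance are hypothetical; real deployments often also track input distributions and per-group metrics, and ground-truth labels may arrive with a delay.

```python
from collections import deque
from typing import Optional


class RollingAccuracyMonitor:
    """Flags possible drift when recent accuracy falls below a baseline."""

    def __init__(self, baseline: float, window: int = 100,
                 tolerance: float = 0.05):
        self.baseline = baseline      # accuracy measured before deployment
        self.tolerance = tolerance    # acceptable drop before alerting
        self.outcomes = deque(maxlen=window)  # oldest results fall off

    def record(self, prediction: int, actual: int) -> None:
        self.outcomes.append(prediction == actual)

    def current_accuracy(self) -> Optional[float]:
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def drift_alert(self) -> bool:
        # Wait for a full window so a handful of early errors cannot trigger it.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.current_accuracy() < self.baseline - self.tolerance


monitor = RollingAccuracyMonitor(baseline=0.90, window=10)
for _ in range(10):
    monitor.record(1, 1)          # healthy period: every prediction correct
print(monitor.drift_alert())      # False
for _ in range(5):
    monitor.record(1, 0)          # drift: the model starts missing
print(monitor.current_accuracy(), monitor.drift_alert())  # 0.5 True
```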

AI governance frameworks are also gaining importance. These frameworks define processes for model updates, documentation, validation, and ethical review, helping ensure that static models do not operate unchecked in dynamic environments.

Governance is not a barrier to innovation. Instead, it enables responsible AI deployment by acknowledging and managing the limitations of static models.

The Role of Explainability and Transparency

Explainable AI techniques help address some consequences of the AI static models problem by making model behavior more understandable. When stakeholders can see why a model produces certain outputs, it becomes easier to identify when those outputs no longer align with reality.

Transparency also builds trust. Users who understand that an AI system has limitations are more likely to use it appropriately rather than relying on it blindly. Clear communication about model scope, training data, and update frequency is an important best practice.

Industry Examples Highlighting the Problem

Real-world examples illustrate the impact of the AI static models problem. Translation systems trained on older corpora often struggle with newly coined terms or regional language changes. Navigation systems may suggest inefficient routes when urban infrastructure changes faster than their models are updated.

In cybersecurity, static threat detection models quickly become ineffective as attackers innovate. This arms race demonstrates why adaptability is critical in adversarial environments.

These examples reinforce a central lesson. Intelligence without adaptation is fragile. Static AI systems perform well only as long as the world remains similar to their training data.

Balancing Stability and Adaptability

One reason the AI static models problem persists is the tension between stability and adaptability. Constantly changing models can behave unpredictably, while static models risk becoming irrelevant. The challenge lies in finding the right balance.

Hybrid approaches are emerging that combine stable core models with adaptive layers. The core handles fundamental patterns, while adaptive components respond to recent data. This architecture preserves reliability while improving responsiveness.
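A minimal illustration of the hybrid idea, assuming a regression setting: the core model is a frozen function that is never retrained, and the adaptive layer is a single learned offset that is nudged toward each observed residual. Practical designs may adapt richer components; the class name, the scalar offset, and the `alpha` rate here are simplifications chosen for the sketch.

```python
from typing import Callable


class HybridModel:
    """Frozen core model plus a small adaptive correction (toy sketch)."""

    def __init__(self, core: Callable[[float], float], alpha: float = 0.1):
        self.core = core      # stable component: never updated after deployment
        self.alpha = alpha    # how quickly the adaptive layer follows new data
        self.offset = 0.0     # adaptive component: learned bias correction

    def predict(self, x: float) -> float:
        return self.core(x) + self.offset

    def observe(self, x: float, y: float) -> None:
        # Move the offset a fraction of the way toward the latest residual.
        residual = y - self.predict(x)
        self.offset += self.alpha * residual


def core(x: float) -> float:
    """Stand-in for a model trained when the world followed y = 2x."""
    return 2.0 * x


model = HybridModel(core)
# After deployment the world shifts to y = 2x + 3; only the offset adapts.
for step in range(200):
    x = step % 10
    model.observe(x, 2.0 * x + 3.0)
print(round(model.offset, 3), round(model.predict(4.0), 3))
```

Keeping the adaptive part this small makes its behavior easy to audit and to reset, while the validated core stays untouched.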

Many practitioners regard this balance as a key design principle for next-generation AI systems.

Future Outlook for AI Beyond Static Models

The future of artificial intelligence depends heavily on addressing the AI static models problem. As AI systems become more deeply integrated into critical decision-making, their ability to adapt responsibly will define their long-term value.

Research into continual learning, federated learning, and self-supervised methods shows promise. These techniques aim to reduce reliance on static datasets and enable models to evolve alongside their environments.
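Federated learning lets a model improve from data that stays on separate devices or sites. A widely used aggregation rule, federated averaging (FedAvg), combines locally trained parameters into a global model, weighting each client by its number of training examples. The function below sketches only that server-side averaging step under simplifying assumptions (parameters as flat lists of floats, no client sampling or privacy mechanisms); local training is omitted.

```python
from typing import List, Sequence


def federated_average(client_params: Sequence[Sequence[float]],
                      client_sizes: Sequence[int]) -> List[float]:
    """Weighted average of client parameter vectors (FedAvg aggregation)."""
    if len(client_params) != len(client_sizes) or not client_params:
        raise ValueError("need one size per client and at least one client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("clients must report a positive number of examples")
    n_params = len(client_params[0])
    averaged = [0.0] * n_params
    for params, size in zip(client_params, client_sizes):
        weight = size / total  # clients with more data count for more
        for j in range(n_params):
            averaged[j] += weight * params[j]
    return averaged


# Two hypothetical clients: the second trained on three times as much data.
global_params = federated_average([[1.0, 2.0], [3.0, 4.0]], [100, 300])
print(global_params)  # [2.5, 3.5]
```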

Organizations that invest in adaptive AI will likely outperform those that rely on static deployments, as users, regulators, and partners increasingly expect AI systems to remain relevant, fair, and accurate over time.

Conclusion

The AI static models problem represents one of the most important challenges in modern artificial intelligence. Static models, while powerful at launch, struggle to keep pace with a changing world. This limitation affects accuracy, fairness, trust, and business outcomes across industries.

By understanding the roots of this problem and embracing adaptive strategies, organizations can build AI systems that remain effective long after deployment. Continuous monitoring, governance, human oversight, and emerging learning techniques all play essential roles.

Ultimately, solving the AI static models problem is not about abandoning stability. It is about designing intelligence that respects the dynamic nature of reality. As AI continues to shape society, addressing this challenge will be critical to ensuring that technology serves people responsibly and effectively.

FAQs

What does the AI static models problem mean in simple terms?

The AI static models problem means that many AI systems stop learning once they are deployed. They rely on old data and assumptions, which makes them less accurate as the world changes.

Why are static AI models still widely used?

Static models are easier to test, control, and regulate. They offer predictable behavior, which is important in sensitive industries, even though they have long-term limitations.

Can retraining fully fix the AI static models problem?

Retraining helps but does not completely solve the problem. Models remain static between retraining cycles and can still fall behind real-world changes.

How does the AI static models problem affect trust in AI?

When AI systems give outdated or biased results, users lose confidence. Transparency and adaptability are key to maintaining long-term trust.

Are adaptive AI models the future?

Many experts believe adaptive and continuously learning systems are the future. They offer a way to balance reliability with responsiveness in changing environments.

For more updates visit: The BeeBom
